H.264, also known as Advanced Video Coding (AVC), was born almost twenty years ago, yet it remains the mainstream choice in an era when multiple video codecs coexist, including HEVC/H.265, VP9, AV1, and the emerging H.266/VVC. Today, we'll take a deep look at this codec from a contemporary perspective: how it came about, how it works, where it stands now, and where it is going.

Part 1. What Is H.264/AVC (Advanced Video Coding)

Recording, storing, or transmitting video without compression is impractical because of the huge amount of data involved. There are many ways to compress video, though, and without common rules the result would be a mess. Video codec standards solve this by letting different software and hardware manufacturers work under shared compression rules. Examples include H.26x from VCEG, VC-1 from the Society of Motion Picture and Television Engineers (SMPTE), and VPx from Google.
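To get a feel for the numbers, here is a rough back-of-the-envelope calculation. The resolution, frame rate, and 8-bit 4:2:0 sampling (12 bits per pixel on average) are illustrative assumptions, not figures taken from any standard.

```python
def raw_bitrate_bps(width, height, fps, bits_per_pixel=12):
    """Bits per second of uncompressed video (12 bpp assumes 8-bit 4:2:0)."""
    return width * height * bits_per_pixel * fps


def size_gigabytes(bitrate_bps, seconds):
    """Storage needed for a stream of the given bitrate, in GB (10^9 bytes)."""
    return bitrate_bps * seconds / 8 / 1e9


raw = raw_bitrate_bps(1920, 1080, 30)
print(f"Raw 1080p30 bitrate: {raw / 1e6:.0f} Mbps")
print(f"One minute of raw video: {size_gigabytes(raw, 60):.1f} GB")
```

Under these assumptions a single minute of uncompressed 1080p video takes several gigabytes, which is why compression is unavoidable in practice.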

H.264 is one such video coding standard. It was co-developed by ITU-T VCEG (Video Coding Experts Group) and MPEG (Moving Picture Experts Group) of ISO/IEC JTC1, a partnership known as the JVT (Joint Video Team). That is why the standard is also referred to as MPEG-4 Part 10, Advanced Video Coding, or MPEG-4 AVC, names that reflect both groups of developers.

H.264 was first published in 2003 and greatly improved compression efficiency through better inter-picture prediction and motion compensation. Videos (1080p and 4K) compressed with H.264 are much easier to store and deliver over a network. It is the most widely accepted codec across the multimedia industry, including HD DVD and Blu-ray discs, HDTV, YouTube video, live streaming, broadcasting, and video recording.

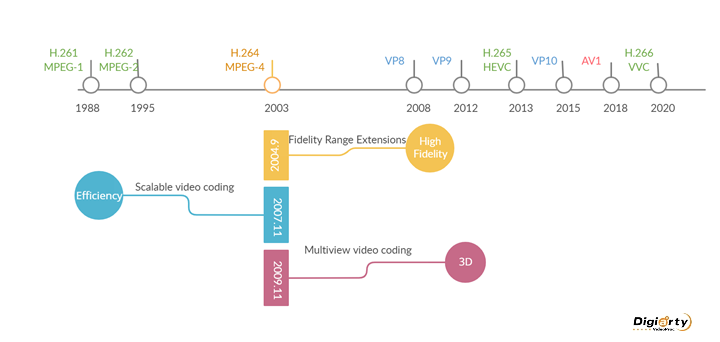

Part 2. The Evolution of H.264 AVC Video Codec

Looking at the history of H.264, we find that it was not born able to compress every kind of video at a high compression ratio. It was extended over the following years with additional features, including support for high-fidelity and 3D video and improvements in coding efficiency.[1]

Part 3. The Advantages of H.264 AVC Codec

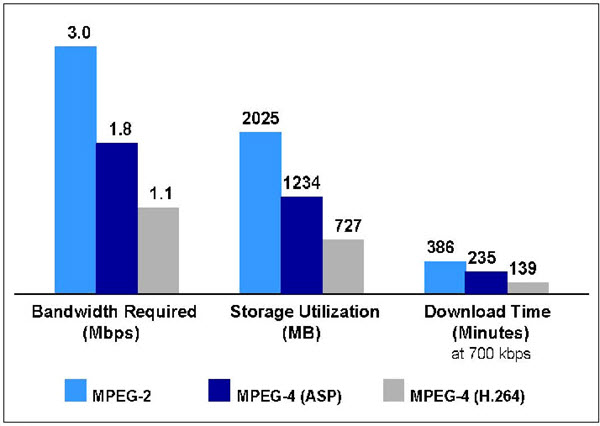

The compression efficiency of H.264 has since been surpassed by its successors, but it was a great leap in the development of the H.26x family. Its main improvements are the integer transform, more flexible motion estimation, an in-loop deblocking filter, and better entropy coding. How does H.264 benefit from them?

- H.264 has a high-performance coding design that saves up to 50% of the bitrate compared to MPEG-2, and up to 80% compared to Motion JPEG.

- It supports high-definition video, including 1080p and 4K at 60 fps.

- H.264 is the most popular video codec across a wide array of software, hardware, and networks, and is more widely supported than H.265/HEVC.

- H.264 accommodates a wide variety of bandwidth requirements and copes with unfavorable network conditions.

Part 4. How H.264/AVC Works

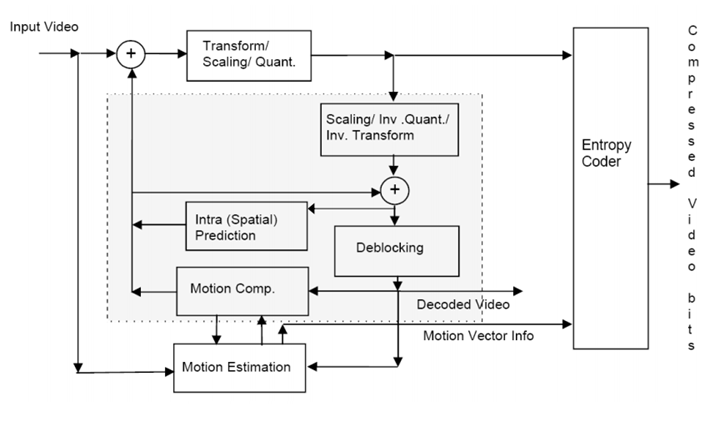

H.264 follows the general process of earlier video coding algorithms while introducing technical innovations in several stages. The whole coding process consists of prediction, transformation, quantization, deblocking, and entropy coding.

1. Inter and Intra Prediction

The perennial goal of video coding is to improve efficiency and save as much bitrate as possible. H.264 is a leap forward from its predecessors in this respect. It removes temporal redundancy with inter prediction and spatial redundancy with intra prediction.

H.264 uses reference frames in inter prediction. For instance, instead of recording every frame of a ball moving against a background in full, H.264 keeps the complete information of only a few frames. For the remaining frames, it keeps only what changed for the moving objects, since the background stays the same.

- I-frame contains the complete information of the image. It is coded independently of all other pictures.

- P-frame contains differences relative to preceding frames.

- B-frame contains differences relative to both preceding and following frames.

- GOP (Group of Pictures) is a sequence of I, P, and B frames that starts with an I-frame. A typical structure could be IBBPBBPBBI or IBBPBBPBBPBBI. The more frames between I-frames, the longer the GOP.
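As a small illustration of the GOP idea, the following sketch splits a string of frame types into GOPs and counts the frames in each. The function name and the sample sequence are made up for this example.

```python
def split_into_gops(frame_types):
    """Split a string of frame types (e.g. 'IBBPBBI...') into GOPs.

    Each GOP starts at an I-frame and runs until just before the next one.
    """
    gops = []
    for frame_type in frame_types:
        if frame_type == "I" or not gops:
            gops.append("")          # an I-frame opens a new GOP
        gops[-1] += frame_type
    return gops


sequence = "IBBPBBPBBIBBPBBPBBPBB"
for gop in split_into_gops(sequence):
    counts = {t: gop.count(t) for t in "IPB"}
    print(gop, "length:", len(gop), counts)
```

Only the I-frame in each group is self-contained, so a longer GOP generally means fewer expensive I-frames but a longer wait before a decoder can start from a clean picture.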

Inter prediction was already used in earlier H.26x and MPEG-x standards, though not as efficiently as in H.264, while intra prediction is the more advanced feature of H.264. It predicts image data on a finer scale. Each frame is divided into blocks of pixels called macroblocks, and intra prediction predicts the luminance and color components of a block from the surrounding, previously coded pixels within the current image.

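The prediction methods of H.264 are quite flexible. For intra prediction, 4x4 luma blocks can choose among 9 prediction modes (8 directional modes plus DC), and 16x16 luma blocks among 4 modes. For inter prediction, a macroblock can be split into 4 partition shapes (16x16, 16x8, 8x16, and 8x8), and each 8x8 partition can be further divided into sub-macroblock partitions of 8x8, 8x4, 4x8, and 4x4. Large partitions suit flat regions with little change in color and luminance, while small partitions suit detailed regions.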
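To make intra prediction more concrete, here is a minimal sketch of three of the simplest 4x4 modes (vertical, horizontal, and DC) and a mode decision based on the sum of absolute differences (SAD). The function names and the sample pixel values are assumptions for illustration; a real encoder evaluates more modes and uses more elaborate cost functions.

```python
def predict_4x4(top, left, mode):
    """Predict a 4x4 block from the 4 pixels above and the 4 pixels to the left."""
    if mode == "vertical":       # copy the row above downwards
        return [list(top) for _ in range(4)]
    if mode == "horizontal":     # copy the left column rightwards
        return [[left[r]] * 4 for r in range(4)]
    if mode == "dc":             # flat block at the rounded mean of the neighbours
        dc = (sum(top) + sum(left) + 4) >> 3
        return [[dc] * 4 for _ in range(4)]
    raise ValueError(f"unknown mode: {mode}")


def sad(block, pred):
    """Sum of absolute differences between two 4x4 blocks."""
    return sum(abs(b - p) for rb, rp in zip(block, pred) for b, p in zip(rb, rp))


def best_mode(block, top, left):
    """Return the cheapest mode and the cost of every mode tried."""
    costs = {m: sad(block, predict_4x4(top, left, m))
             for m in ("vertical", "horizontal", "dc")}
    return min(costs, key=costs.get), costs


# A block of vertical stripes is predicted perfectly from the row above it.
top = [10, 50, 90, 130]
left = [10, 10, 10, 10]
block = [list(top) for _ in range(4)]
print(best_mode(block, top, left))
```

Whichever mode wins, only the mode choice and the (ideally small) difference between the block and its prediction need to be coded.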

- Motion estimation: Frames are divided into macroblocks. Motion estimation searches a reference frame for the block of pixels that best matches the current macroblock.

- Motion compensation: After motion estimation, the inter prediction is subtracted from the current macroblock, leaving a residual.
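The two steps above can be sketched as an exhaustive block-matching search followed by a subtraction. This is a simplified illustration with integer-pixel motion and a tiny search window; the helper names, block size, and test frames are assumptions, and practical encoders use faster search strategies and sub-pixel precision.

```python
def block_at(frame, y, x, size):
    """Extract a size x size block whose top-left corner is (y, x)."""
    return [row[x:x + size] for row in frame[y:y + size]]


def sad(a, b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def motion_search(cur, ref, y, x, size=4, search_range=2):
    """Motion estimation: return ((dy, dx), cost) of the best match in ref."""
    target = block_at(cur, y, x, size)
    height, width = len(ref), len(ref[0])
    best = None
    for dy in range(-search_range, search_range + 1):
        for dx in range(-search_range, search_range + 1):
            ry, rx = y + dy, x + dx
            if 0 <= ry <= height - size and 0 <= rx <= width - size:
                cost = sad(target, block_at(ref, ry, rx, size))
                if best is None or cost < best[1]:
                    best = ((dy, dx), cost)
    return best


def residual(cur, ref, y, x, mv, size=4):
    """Motion compensation: current block minus the motion-shifted prediction."""
    target = block_at(cur, y, x, size)
    pred = block_at(ref, y + mv[0], x + mv[1], size)
    return [[t - p for t, p in zip(rt, rp)] for rt, rp in zip(target, pred)]


def make_frame(obj_y, obj_x, size=8):
    """8x8 black frame with a textured 4x4 object at (obj_y, obj_x)."""
    frame = [[0] * size for _ in range(size)]
    for r in range(4):
        for c in range(4):
            frame[obj_y + r][obj_x + c] = 100 + 10 * r + c
    return frame


ref = make_frame(2, 2)   # reference frame
cur = make_frame(3, 3)   # same object, moved one pixel down and right
mv, cost = motion_search(cur, ref, 3, 3)
print("motion vector:", mv, "SAD:", cost)
print("residual:", residual(cur, ref, 3, 3, mv))
```

When the match is good, the residual is close to zero and only the motion vector plus a few small differences have to be coded.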

2. Transformation and Quantization

Although inter and intra prediction remove a large amount of redundant data, the residual data can be compressed further. The next step, transformation, converts the motion-compensated residual data into another domain. The transform used in this standard is computationally light, requiring little memory and few arithmetic operations. H.264 uses an integer approximation of the DCT (Discrete Cosine Transform), which is exactly reversible and forms the core of the transformation process.

The output of the transform is a set of coefficients. Quantization reduces the range of these coefficients by mapping them onto a smaller set of values. Because human vision is more sensitive to low-frequency signals than to high-frequency ones, quantization can discard much of the high-frequency detail with little visible loss.
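The two steps can be sketched with the 4x4 integer core transform commonly described for H.264, followed by a deliberately simplified uniform quantizer. The `step` parameter is a hypothetical single step size; a real encoder derives its step from the quantization parameter (QP) and folds scaling factors into this stage, which is omitted here.

```python
# 4x4 integer core transform matrix (an integer approximation of the DCT)
CF = [[1, 1, 1, 1],
      [2, 1, -1, -2],
      [1, -1, -1, 1],
      [1, -2, 2, -1]]


def matmul(a, b):
    """Multiply two 4x4 matrices of integers."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transpose(m):
    return [list(col) for col in zip(*m)]


def forward_transform(block):
    """Y = Cf * X * Cf^T, using integer arithmetic only."""
    return matmul(matmul(CF, block), transpose(CF))


def quantize(coeffs, step):
    """Simplified uniform quantization: divide by step, round towards zero."""
    return [[int(c / step) for c in row] for row in coeffs]


# A smooth residual block: every row is the same gentle ramp.
residual_block = [[5, 6, 7, 8] for _ in range(4)]
coeffs = forward_transform(residual_block)
print("coefficients:", coeffs)
print("quantized:   ", quantize(coeffs, 8))
```

For smooth content most of the energy lands in the top-left (low-frequency) coefficients, and after quantization many of the remaining coefficients become zero, which is what makes the later entropy coding stage effective.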

3. Deblocking filter

Since H.264/AVC is block-oriented, the compressed video is composed of square blocks, which can produce visible blocking artifacts. An adaptive in-loop deblocking filter smooths the edges between blocks. The filter is adaptive on three levels: slice level, block-edge level, and sample level. It can make a considerable difference in visual quality while also saving about 6-9% of the bitrate.
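The sample-level adaptivity can be illustrated with a simplified sketch modelled on the normal-strength luma filter: a small step across a block edge is treated as a coding artifact and smoothed, while a large step is assumed to be a real edge in the picture and left alone. The threshold values `alpha`, `beta`, and `tc` below are placeholders; in the standard they come from tables that depend on the quantization parameter and the boundary strength.

```python
def clip(value, low, high):
    return max(low, min(high, value))


def filter_edge(p1, p0, q0, q1, alpha, beta, tc):
    """Filter the two samples next to a block edge: ... p1 p0 | q0 q1 ...

    Returns the (possibly modified) values of p0 and q0.
    """
    # Sample-level decision: skip filtering when the step across the edge,
    # or the activity on either side of it, is too large to be an artifact.
    if abs(p0 - q0) >= alpha or abs(p1 - p0) >= beta or abs(q1 - q0) >= beta:
        return p0, q0
    # Correction pulled towards the other side, limited to +/- tc.
    delta = clip(((q0 - p0) * 4 + (p1 - q1) + 4) >> 3, -tc, tc)
    return clip(p0 + delta, 0, 255), clip(q0 - delta, 0, 255)


print(filter_edge(100, 100, 108, 108, alpha=20, beta=5, tc=4))  # small step
print(filter_edge(50, 50, 200, 200, alpha=20, beta=5, tc=4))    # real edge
```

Because the filter runs inside the coding loop, the smoothed pictures are also the ones used as references for later frames, which is how it can improve prediction as well as appearance.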

4. Entropy Coding

Entropy coding is a lossless compression scheme that converts the data into a bitstream that can be stored and transmitted. The bitstream carries not only the quantized transform coefficients but also information about the structure of the compressed data, the prediction modes, and so on.

H.264 offers two entropy coding methods: CAVLC (context-adaptive variable-length coding) and CABAC (context-adaptive binary arithmetic coding). CABAC is notable for providing much better compression than most other entropy coders and is one of the crucial elements that make H.264/AVC so much better than its predecessors.
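CABAC is too involved for a short example. A much simpler variable-length code that H.264 also uses, for many header and syntax elements, is unsigned Exp-Golomb coding, and it shows the basic idea of entropy coding: small, frequent values get short codes. The sketch below works on bit strings for readability; the function names are assumptions for this example.

```python
def ue_encode(value):
    """Unsigned Exp-Golomb code of a non-negative integer, as a bit string."""
    bits = bin(value + 1)[2:]              # binary representation of value + 1
    return "0" * (len(bits) - 1) + bits    # leading zeros announce the length


def ue_decode(bitstring, pos=0):
    """Decode one code starting at pos; return (value, next position)."""
    zeros = 0
    while bitstring[pos + zeros] == "0":
        zeros += 1
    end = pos + 2 * zeros + 1
    return int(bitstring[pos + zeros:end], 2) - 1, end


values = [0, 1, 2, 3, 7]
stream = "".join(ue_encode(v) for v in values)
print("codes: ", [ue_encode(v) for v in values])
print("stream:", stream)

decoded, pos = [], 0
while pos < len(stream):
    value, pos = ue_decode(stream, pos)
    decoded.append(value)
print("decoded:", decoded)
```

No separators are needed between the codes, because the run of leading zeros tells the decoder how many bits belong to each value.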

Playing an H.264 video means decoding it, which reverses the encoding process. The decoder receives the bitstream, decodes and extracts the elements described above, and finally reconstructs a sequence of images.
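The very first thing a decoder does with a raw H.264 byte stream is find the individual NAL (Network Abstraction Layer) units, which are separated by `00 00 01` start codes, and read each unit's type from the low five bits of its first byte. The following is a minimal sketch of that step only. The sample bytes are hand-made, the type table lists just a few common values, and details such as emulation-prevention bytes are ignored.

```python
NAL_TYPES = {1: "non-IDR slice", 5: "IDR slice", 6: "SEI",
             7: "SPS", 8: "PPS", 9: "access unit delimiter"}


def split_nal_units(data):
    """Split a start-code-delimited byte stream into NAL units."""
    units, i, start = [], 0, None
    while i + 3 <= len(data):
        if data[i:i + 3] == b"\x00\x00\x01":
            if start is not None:
                # A 4-byte start code leaves one extra zero byte; drop it.
                units.append(data[start:i].rstrip(b"\x00"))
            start = i + 3
            i += 3
        else:
            i += 1
    if start is not None and start < len(data):
        units.append(data[start:])
    return [u for u in units if u]


def nal_unit_type(unit):
    """The NAL unit type is stored in the low 5 bits of the first byte."""
    return unit[0] & 0x1F


stream = (b"\x00\x00\x00\x01\x67\x42"    # sequence parameter set (SPS)
          b"\x00\x00\x00\x01\x68\xce"    # picture parameter set (PPS)
          b"\x00\x00\x01\x65\x88")       # IDR slice, i.e. part of an I-frame
for unit in split_nal_units(stream):
    t = nal_unit_type(unit)
    print(t, NAL_TYPES.get(t, "other"))
```

The parameter sets tell the decoder how to interpret the slices that follow, which is why they normally appear before the first coded picture.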

Part 5. How Does H.264/AVC Compare with Other Codecs

1. H.264 VS MPEG-2

MPEG-2 (H.262) is a standard that defines a combination of lossy video and audio compression methods. It was released in 1995 and is not as efficient as its successor, H.264. For a 480p DVD video, H.264 can provide the same quality at about half the bitrate of MPEG-2. For 1920x1080 content, MPEG-2 runs at a bitrate of 12-20 Mbps.

MPEG-2 is widely used in over-the-air digital television broadcasting, DVDs, and SD digital video, while H.264 delivers better video quality and is used in more applications and systems, mainly for HD video streaming.

2. H.264 VS H.263

H.263 was designed by the ITU-T Video Coding Experts Group in 1995. It is primarily known as a low-bit-rate video codec for video conferencing. Before H.264, much of the streaming content on the internet was based on the H.263 coding scheme. H.263+ and H.263++ are its subsequent versions. Later, the ITU adopted H.264 as its successor for video conferencing.

Because H.264 provides much higher quality across the entire bandwidth spectrum, H.263 has been replaced and is now considered a legacy codec. Most new videoconferencing products support H.264, H.263, and H.261, as well as newer formats such as H.265, RTV (the Real-Time Video codec developed by Microsoft), and VP8/VP9.

3. H.264 VS Windows Media 9

Windows Media 9 (more accurately, Windows Media Encoder 9) is a free media encoder created by Microsoft. It was developed to convert audio, video, and computer screen images to Windows Media formats for live and on-demand distribution. Windows Media 9 was developed by a single company for a narrow set of uses, while H.264 is a video codec standard ratified by experts worldwide from many industry segments. Video quality tests from Apple show that H.264 delivers superior video quality compared with Windows Media 9.

With the removal of Windows Media DRM in the Windows 10 Anniversary Update, Windows Media Encoder 9 has not been supported by current versions of Windows since May 2017.

4. H.264 VS Pixlet

Pixlet is a video codec developed by Apple in 2003 at the request of the animation company Pixar, and it is available only on Mac OS X 10.3 and later. Pixlet and H.264 were developed for different uses. Pixlet focuses on video editing rather than distribution. It uses no interframe compression and stores every frame independently, so artists can scrutinize every detail of a sequence frame by frame. As a result, it requires high-end hardware for professional playback.

H.264, by contrast, is a delivery codec optimized for high quality and efficiency. Pixlet may require about 40 Mbps for 1920x1080 content, whereas H.264 delivers the same content at about 8 Mbps. This efficiency enables video delivery and playback on a wide range of devices, including HDTVs, computers, and smartphones.

5. H.264 VS H.265/HEVC

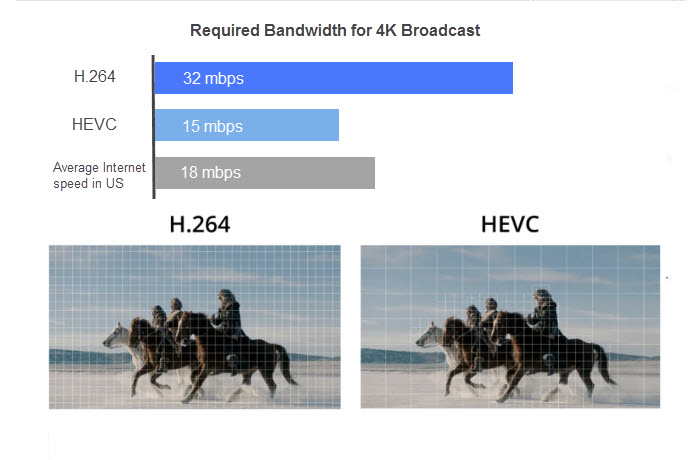

Ten years after the birth of H.264, a newer and more efficient compression standard, H.265/HEVC (High Efficiency Video Coding), was standardized on April 13, 2013. Compared to H.264, it provides up to 50% better data compression at the same video quality, or substantially improved video quality at the same bitrate.[2]

H.264 supports video up to 4K (4096x2160), while H.265 was developed for resolutions up to 8K UHD (8192x4320), as well as Dolby Vision, HDR, and 360-degree video. Some newer devices ship with a built-in hardware decoder for H.265. As of 2019, H.265 was used by over 43% of developers (second only to H.264), and it was still on the rise in 2020. But the enhanced video quality and reduced bandwidth come at a cost: H.265 encoding and decoding require almost 10x more computing power than H.264.

At present, H.264 has one more younger sibling, H.266/VVC, as well as newer competitors (AV1 and VP9). Read the comprehensive comparison: H.264 vs Newer Codecs

Part 6. H.264/AVC and WebRTC

H.264/AVC greatly shrinks media files and lets video be distributed quickly over the internet, so we can watch YouTube videos, Netflix series, and Twitch live streams anytime and anywhere. Before 2013, however, enabling real-time video natively on the web was not this easy, because H.264 is a patented video codec: any person or company that wanted to use it had to pay substantial license fees to MPEG LA, the licensing administrator for the codec's patent pool.

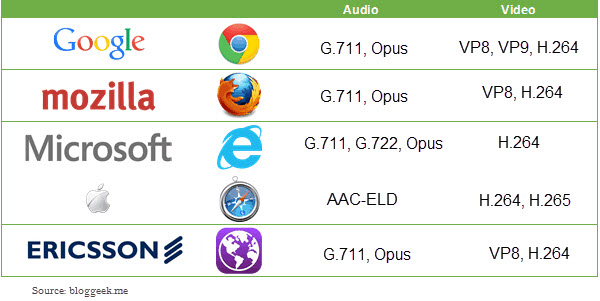

In 2013, Cisco open-sourced its H.264 implementation (OpenH264) to remove barriers to WebRTC under the standards developed by the IETF (Internet Engineering Task Force) and W3C (World Wide Web Consortium). Cisco provides the source code and a binary module that can be downloaded from the internet for free. Moreover, Cisco does not pass on its MPEG LA licensing costs for this module, so that H.264 and WebRTC can work together effectively. H.264 still has its place in WebRTC today.

WebRTC (Web Real-Time Communications) is an open-source project created in 2011 by Google to enable P2P video, audio, and data transfers in web browsers (Chrome, Firefox, Edge, and Safari) and mobile apps.

Cisco thus enabled the industry to move forward on WebRTC and made the technology broadly available to developers, users, and vendors around the world. In the same year, Mozilla announced that it would use this module and brought real-time H.264 support to Firefox on most operating systems. In the following year, Ericsson released a WebRTC-based browser with OpenH264 support. Google had announced in 2011 that it would drop the H.264 codec from Chrome, but H.264 support for WebRTC later arrived in Chrome 50.

The Benefits of H.264 Being Supported in WebRTC

It enables interoperability with most video-capable mobile devices and browsers, which is great for industry acceptance. You can use it for P2P video calls and to integrate real-time audio, video, and text communication into a web or mobile application.
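In practice, two WebRTC peers agree on a codec by exchanging SDP (Session Description Protocol) descriptions. As a rough sketch, the following function scans an SDP text for `a=rtpmap` lines that advertise H.264. The sample SDP is a hand-written fragment, and the function name is an assumption for illustration.

```python
def h264_payload_types(sdp):
    """Return the RTP payload types that an SDP description maps to H.264."""
    result = []
    for line in sdp.splitlines():
        line = line.strip()
        if line.startswith("a=rtpmap:"):
            # Format: a=rtpmap:<payload type> <encoding name>/<clock rate>
            payload_type, _, encoding = line[len("a=rtpmap:"):].partition(" ")
            if encoding.split("/")[0].upper() == "H264":
                result.append(int(payload_type))
    return result


sample_sdp = """\
m=video 9 UDP/TLS/RTP/SAVPF 96 102
a=rtpmap:96 VP8/90000
a=rtpmap:102 H264/90000
a=fmtp:102 level-asymmetry-allowed=1;packetization-mode=1;profile-level-id=42e01f
"""
print("H.264 payload types:", h264_payload_types(sample_sdp))
```

If both sides list an H.264 payload type with compatible parameters, the call can use H.264; otherwise the peers fall back to another codec they share.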

But H.264 is not the only game in town. More open-source video codecs have been created, such as VP8, VP9, and AV1. The newer ones are claimed to be up to twice as efficient as H.264 and are expected to be adopted by more online services.

Part 7. Frequently Asked Questions about H.264/AVC

1. Why is H.264 included in QuickTime 7?

The International Organization for Standardization selected the QuickTime file format as the basis for MPEG-4. In return, QuickTime embraced open standards and built H.264 into its media architecture in the same way as other QuickTime codecs.

The primary reason was that AVC delivered the best video quality of its time at a much lower data rate than its peers. Another reason was that many companies around the world had announced that they would use this coding scheme to interoperate with each other. Apple planned to join this trend and take the lead in the market for H.264 content creation and playback.

2. Will application developers be able to access H.264 via QuickTime APIs?

Yes. Apple promised to build H.264 into the QuickTime media architecture just like other QuickTime video codecs, so developers can use QuickTime APIs to add H.264 encoding and decoding capabilities to their programs. For example, SlideShare and many other programs built on the QuickTime architecture can use the H.264 support in QuickTime 7 and later.

3. Does H.264 require special hardware?

This question would have mattered 10 years ago. Today, modern devices easily meet its hardware requirements, since it is a universal codec in our digital life. Internet-sized content at 40-300 kbps runs on even the most basic processors, such as those in smartphones and consumer-level computers.

4. What is the relationship between H.264 and the new standards for HD DVDs?

The HD DVD standards have included H.264 since its release. Three codecs can be used on HD DVDs: VC-1, H.264/MPEG-4 AVC, and H.262/MPEG-2 Part 2.

5. Is H.264 the same as MP4?

As mentioned above, H.264 is a video coding standard that defines how a video is compressed. MP4, by contrast, is a container format. MP4 and most other video formats (MOV, AVCHD, MKV, AVI, FLV, etc.) can hold H.264 video.
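One practical way to see the difference is to ask which codec is inside a given container file. The sketch below builds an `ffprobe` command for that purpose. It assumes FFmpeg is installed and on the PATH, and the helper names and the file name are hypothetical.

```python
import subprocess


def ffprobe_codec_cmd(path):
    """Command that prints the codec name of the first video stream."""
    return [
        "ffprobe", "-v", "error",
        "-select_streams", "v:0",
        "-show_entries", "stream=codec_name",
        "-of", "default=noprint_wrappers=1:nokey=1",
        path,
    ]


def video_codec(path):
    """Run ffprobe and return the codec name it reports for the file."""
    result = subprocess.run(ffprobe_codec_cmd(path),
                            capture_output=True, text=True, check=True)
    return result.stdout.strip()


# Show the command only; call video_codec("movie.mp4") on a real file to run it.
print(" ".join(ffprobe_codec_cmd("movie.mp4")))
```

Two files with the same `.mp4` extension can report different codecs, which is exactly the container-versus-codec distinction described above.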

6. Is H.264 good for YouTube?

YouTube officially recommends MP4 with the H.264 video codec and the AAC audio codec as the best upload format. But H.264 was mainly developed to be efficient in bandwidth and storage, so you need to choose the video bitrate carefully to keep quality loss to a minimum.

7. In which industries does H.264 play a role?

The H.264 standard is flexible enough to be applied to a wide range of applications, networks, devices, and systems, and it provides integrated support for both video transmission and storage. You may or may not have noticed that it has been adopted in almost all multimedia fields:

- HD DVDs and Blu-ray discs.

- Terrestrial broadcasting, direct broadcast satellite TV, and IPTV broadcasting.

- Video conferencing.

- Live streaming on applications and browsers.

- Online video distribution on YouTube, Facebook, Twitter, etc.

- IP video surveillance. IP security cameras compress video with the H.264 codec.

- Post-editing of videos. As a lossy compression codec, it puts less pressure on editing software and hardware.

8. What is the relationship between x264, H.26L, MPEG-4, and H.264?

x264: An open-source encoder developed by VideoLAN for encoding video streams into the H.264 coding format.

H.26L: The working name of the VCEG project, started after H.263, that set out to achieve a 50% bitrate reduction compared to H.263. Its design became the basis of H.264.

MPEG-4: A family of standards that defines the compression of both video and audio data. It consists of several parts, and H.264 (a.k.a. MPEG-4 Part 10) is one of them, used to define video compression.

Part 8. The Future of H.264

Will H.264 be replaced by newer codecs? This question comes up every time a new codec appears, yet it has never been settled.

Some thought it would be replaced by HEVC, but as of 2020 AVC still has its place, and Plex still transcodes only to H.264/AVC, whatever the source format. In retrospect, plenty of video codecs have been knocked out, such as H.263 and H.26L. But MPEG-2 still plays a role in the DVD and BD industries, which suggests that a codec marking a significant milestone in video delivery can last longer. For now, H.264 still performs excellently in combining compatibility, coding efficiency, and video quality.

It is reasonable to predict that once codecs with a higher compression ratio spread to every application and device, the battlefield will shift to the patent pool. Some experts assert that royalty-free codecs such as VP9 and AV1 are more promising and pose a serious challenge to the MPEG codecs. But since open-source encoders (x264, JM, etc.) have been developed, H.264 is not strictly locked down by intellectual property. We will have to wait and see whether it survives the next video codec revolution.

Reference

[1] Advanced Video Coding, Wikipedia

[2] H.264/AVC vs H.265/HEVC bit rate test, BBC R&D's video coding research team